Just now, I tweeted this:

… and it’s because I was reading some poetry and noticed poets using italics in various ways as a literary device to help tell their stories.

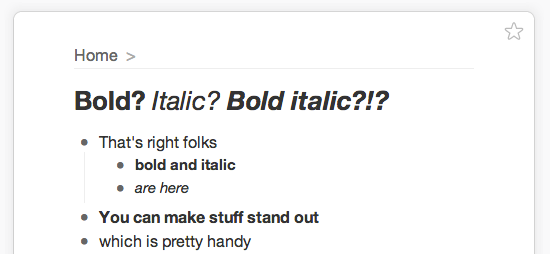

It occurred to me, and it seems so obvious now, that GPT-3 never returns text in bold, italics, or underline. I’m not sure whether this is a design choice in how its output is produced, or a result of its training process, where any formatting (like `<strong>` or `<i>` HTML tags) is intentionally stripped so that GPT-3 learns from a pure, plain-text version of every sequence of text.
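To make that speculation concrete, here is a minimal sketch, assuming a training pipeline that flattens HTML to plain text by simply dropping every tag. I don’t know that GPT-3’s pipeline actually works this way. It uses Python’s standard-library `html.parser`; the `TextOnly` class and `strip_formatting` helper are hypothetical names for illustration.

```python
from html.parser import HTMLParser


class TextOnly(HTMLParser):
    """Hypothetical helper: keeps text nodes and discards every tag (and so all formatting)."""

    def __init__(self):
        super().__init__()
        self.parts = []

    def handle_data(self, data):
        # Called for the text between tags; the tags themselves are never recorded.
        self.parts.append(data)


def strip_formatting(html: str) -> str:
    """Return only the plain text of an HTML snippet (assumed flattening step)."""
    parser = TextOnly()
    parser.feed(html)
    parser.close()  # flush any buffered text
    return "".join(parser.parts)


print(strip_formatting("Oh, <i>great</i>. Another <strong>Monday</strong>."))
# Oh, great. Another Monday.
```

The words survive, but whatever the italics and bold were signalling is gone.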

Still, if formatting really is left out of training, that’s an interesting point. There’s a whole world of formatting GPT-3 may never have seen, including text in a different colour, and exposure to it might improve the underlying model or make it a better writer. I wonder what’s going through its head when it misses a joke because it can’t see that the text is italicized and that the human writer is being sarcastic.

The whole situation is unfortunate. Imagine you were trained on a vast chunk of humanity’s knowledge, history, and greatest works, but never saw a single bold or italic character. How many important contextual details would you miss?

I just asked it how it felt about all this:

Human: GPT-3 was never trained on data with any kind of formatting. It has never seen bold or italic text. How do you feel about this GPT-3?

GPT-3: I feel fine. I am not concerned. I am not a racist.

Seems reasonable, actually. But I tried again, and it said:

Human: GPT-3 was never trained on data with any kind of formatting. It has never seen bold or italic text. How do you feel about this GPT-3?

GPT-3: I will be so bold to say that I feel that I am italic. I feel that I am bold. I feel that I am italic. I feel that I am bold. I feel that I am italic. I feel that I am bold.

Maybe we’ll revisit this one another day … say, when GPT-4F comes out.