This Week in Multimodal/GPT-3/OpenAI News (Links) PART II

More updates, continued here!

It's as if none of these tech companies actually use their own SOTA, large-scale language models to come up with an appealing name for their product or company.

So far, we've got:

Cohere

Gopher

MUM

LaMDA

GPT-3

EleutherAI

🤷♂️❓❓❓❓

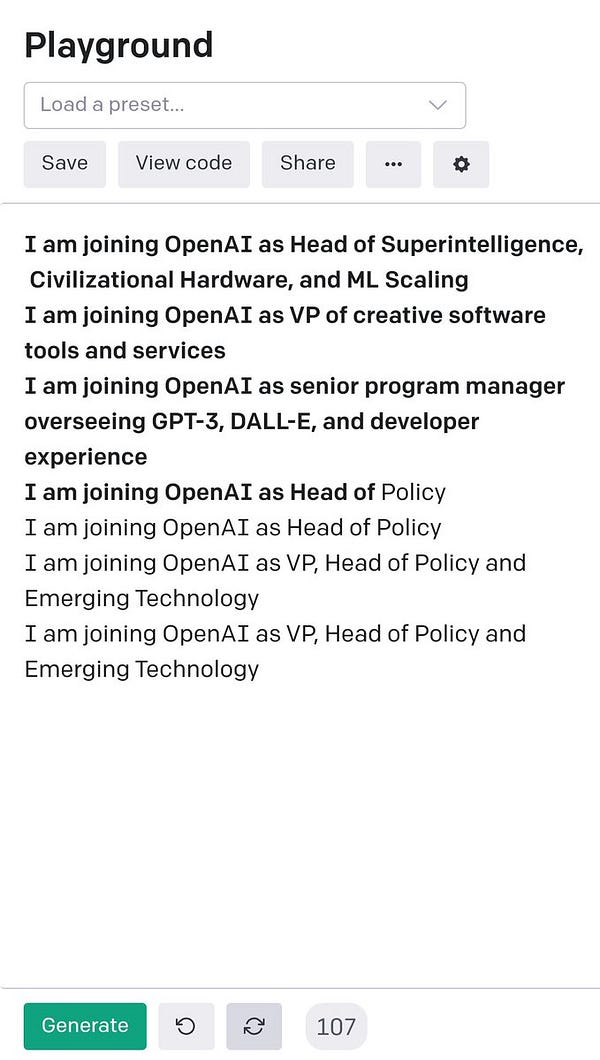

Often, when I generate OpenAI job titles with few-shot GPT-3, the output skews toward AI Policy / legal team roles. I'm not sure what this means about the future 🤔

Google just announced GLaM - it's what they're calling a generalist language model. It's a mixture-of-experts (MoE) model that shows some promise and has some key architectural differences.
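For anyone new to MoE, here's a rough sketch of the general idea: a router scores the experts for each token and only the top few actually run. This is a generic illustration of sparse top-k routing, not GLaM's code; the toy experts, the router logits, and the function names are all assumptions made up for the example.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k=2):
    """Return [(expert_index, weight)] for the k highest-scoring experts.

    Weights are renormalized so the selected experts sum to 1.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_layer(x, gate_scores, experts, k=2):
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Toy experts (hypothetical): each one just scales its input
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
gate_scores = [0.1, 2.0, 0.3, 2.0]  # made-up router logits for one token

routing = route_top_k(gate_scores)
output = moe_layer(10.0, gate_scores, experts)
```

The appeal is that total parameter count can grow with the number of experts while the compute per token stays tied to `k`.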

Introducing MIA, a Multimodal Interactive Agent that cooperates and communicates with humans in a 3D virtual world called the Playhouse. MIA trains with imitation & self-supervised learning using 2.94 years of human experience dpmd.ai/imitation-lear…


BREAKING: DeepMind announces 280 billion parameter transformer language model called Gopher [research papers] h/t @owenlacava

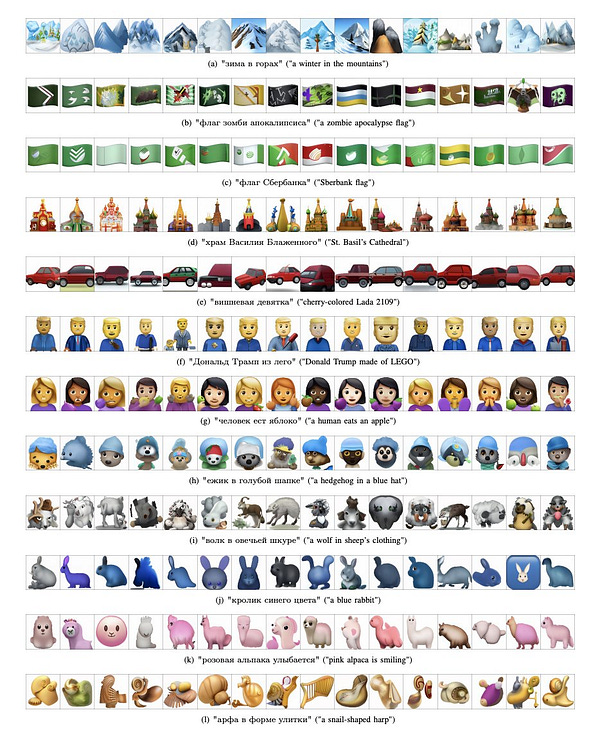

Emojich -- zero-shot emoji generation using Russian language: a technical report

abs: arxiv.org/abs/2112.02448

An announcement about PresetJSON, a standard I created.

github.com/fredzannarbor/…

You can use this to separate content from logic in your applications, to construct complex prompts, to involve content experts, and to make it easier to share detailed presets with other developers.
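To make the "separate content from logic" idea concrete, here's a minimal sketch. The field names (`name`, `template`, `temperature`) and the `render_prompt` helper are hypothetical and are not taken from the PresetJSON specification; check the repo for the real schema.

```python
import json

# Hypothetical preset file contents; a content expert could edit this
# without touching any application code.
preset_text = """
{
  "name": "product-tagline",
  "template": "Write a tagline for {product} aimed at {audience}.",
  "temperature": 0.7
}
"""

def render_prompt(preset, **values):
    # Application logic only fills in the slots the preset defines
    return preset["template"].format(**values)

preset = json.loads(preset_text)
prompt = render_prompt(preset, product="a note-taking app", audience="students")
```

Swapping in a different preset changes the prompt wording without a code change, which is the sharing benefit described above.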

Our first AI alignment paper, focused on simple baselines and investigations: A General Language Assistant as a Laboratory for Alignment arxiv.org/abs/2112.00861

New OpenAI embeddings API:

Text Similarity: excels in capturing semantic similarity between pairs of text

Text Search: excels in finding relevant documents for a query among a collection of documents

Code Search: excels in finding relevant code blocks for a natural language query
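Here's a small sketch of what a search use case looks like once you have embeddings in hand: rank documents by cosine similarity to the query vector. The three-dimensional vectors below are made up for illustration and no API call is shown; in practice the vectors would come from the embeddings endpoint and have far more dimensions.

```python
import math

def cosine_similarity(a, b):
    # Cosine of the angle between two equal-length vectors
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank_documents(query_vec, doc_vecs):
    """Return (name, score) pairs sorted from most to least similar."""
    scored = [(name, cosine_similarity(query_vec, vec)) for name, vec in doc_vecs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Toy embeddings (assumed values, not real model output)
doc_vecs = {
    "refund policy": [0.9, 0.1, 0.0],
    "shipping times": [0.1, 0.9, 0.1],
    "api rate limits": [0.0, 0.2, 0.9],
}
query_vec = [0.8, 0.2, 0.1]

ranking = rank_documents(query_vec, doc_vecs)
```

The same ranking step applies to text search and code search; only the model that produces the vectors differs.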

If you run an online forum, social media platform, or online comments section, now is the time for you to develop a disinformation and AI-generated content strategy.

2022 will be a critical year for the authenticity and legitimacy of human online discourse and communication.

My other prediction is that in early Q1 2022, OpenAI will relax or streamline the GPT-3 app commercialization process. We could also use a refinement of OpenAI's bring-your-own-key policies, imo. cc @vovahimself

Next June 2022, 465 OpenAI GPT-3 developers will be competing in a tournament to the death for a total of 456K tokens and a cash total of 10 million Dogecoin.

Challenges will include cost-efficient prompt design, polyglot prompt writing, and avocado chair (hand) drawing skills.

This is exactly what I'm talking about! OpenAI's strategy shift is now centered on increased generosity towards GPT-3 developers. This time, they've announced new pricing and capability updates for the fine-tuning API.

OpenAI's next moves, now that GPT-3 is open to the public:

- more generous free trial period by default

- more marketing/promotion

- cash grants for GPT-3 developers

- Microsoft Azure credits + other startup offers

- "public utility" special tier pricing

As one example of adaptability, the coming epidemic of fake AI-generated content (text, images, audio, and video) that will get amplified on social media is going to require some rapid societal antibodies and new frameworks for trust.

It’s been a dream building machines to help humans be more creative. Happy to announce @superamit and I raised $3M from a stellar set of believers to accelerate @sudowrite

No more waitlist for the OpenAI API. Sign up and start building with GPT-3 right away.

OpenAI @OpenAI

OpenAI going public with GPT-3 is about so many things - it's a research, technological scaling, product, community, and policy achievement that is the result of many years of hard work.

It is clearly plausible that future large training runs will be sufficient for current deep learning methods to outperform humans at most economically valuable work.

"Fermi Estimate of Future Training Runs"

danieldewey.net/risk/estimates…

Why can GPT-3 magically learn tasks? It just reads a few examples, without any parameter updates or explicitly being trained to learn.

We prove that this in-context learning can emerge from modeling long-range coherence in the pretraining data!

arxiv.org/abs/2111.02080

(1/n)
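If "reads a few examples" sounds abstract, here's a minimal sketch of what a few-shot prompt looks like in practice. The sentiment task, the labels, and the `build_few_shot_prompt` helper are assumptions for illustration; the point is only that the "training data" lives in the prompt text and no weights change.

```python
def build_few_shot_prompt(examples, query, input_label="Review", output_label="Sentiment"):
    """Format labeled examples followed by an unlabeled query for the model to complete."""
    blocks = [f"{input_label}: {x}\n{output_label}: {y}" for x, y in examples]
    # The final block leaves the output empty so the model fills it in
    blocks.append(f"{input_label}: {query}\n{output_label}:")
    return "\n\n".join(blocks)

# Hypothetical demonstrations for a sentiment task
examples = [
    ("Loved every minute of it.", "positive"),
    ("A total waste of time.", "negative"),
]

prompt = build_few_shot_prompt(examples, "Surprisingly good.")
```

Sending `prompt` to a completion model and reading back the next token or two is the whole "learning" step.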

I'm excited to work on more side projects now because I know GitHub Copilot will help and save me time as a solo founder/developer.