This Week in Multimodal/GPT-3/OpenAI News (Links) PART II

More links for you, continuing my last email!

LLMs like GPT-3 and Codex contain rich world knowledge. In this fun study, we ask if GPT-like models can plan actions for embodied agents.

Turns out, with apt sanity checks, even vanilla LLMs without any finetuning can generate good high-level plans given a low-level controller.
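For the curious, here's a minimal sketch of what a "sanity check" between an LLM's free-form plan and a low-level controller could look like. To be clear, this is my own illustration and not the authors' method: the action list, the `sanity_check_plan` helper, the sample steps, and the 0.6 cutoff are all hypothetical, and I'm using plain string similarity from Python's standard `difflib` as a stand-in for whatever matching the paper actually does.

```python
from difflib import get_close_matches

# Hypothetical action vocabulary of a low-level controller (an assumption for illustration).
ADMISSIBLE_ACTIONS = [
    "walk to kitchen",
    "open fridge",
    "grab milk",
    "close fridge",
]


def sanity_check_plan(steps, admissible=ADMISSIBLE_ACTIONS, cutoff=0.6):
    """Map each free-form step to its closest admissible action.

    Steps with no sufficiently close match are dropped instead of being
    passed on to the controller.
    """
    plan = []
    for step in steps:
        normalized = step.lower().strip()
        matches = get_close_matches(normalized, admissible, n=1, cutoff=cutoff)
        if matches:
            plan.append(matches[0])
    return plan


# Made-up text standing in for steps a language model might generate.
llm_steps = ["Walk to the kitchen", "Open the fridge", "Grab the milk", "Do a backflip"]
checked_plan = sanity_check_plan(llm_steps)
print(checked_plan)
```

The point of the sketch is only the shape of the idea: the model proposes steps in free text, and a cheap filter keeps the ones the controller can actually execute.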

Don't say I didn't raise the alarm about Replika last year. They're a "unique agent" in the language model world, to say the least.

CoAuthor: Human-AI Collaborative Writing Dataset #CHI2022

👩‍🦰🤖 CoAuthor captures rich interactions between 63 writers and GPT-3 across 1445 writing sessions

Paper & dataset (replay): coauthor.stanford.edu

Joint work with @percyliang @fabulousQian 🙌

Language Models as Zero-Shot Planners: Extracting Actionable Knowledge for Embodied Agents

abs: arxiv.org/abs/2201.07207

project page: wenlong.page/language-plann…

LLMs such as GPT-3 and Codex can plan actions for embodied agents, even without any additional training

LaMDA: Language Models for Dialogue Applications

Paper: arxiv.org/pdf/2201.08239…

Blogpost: ai.googleblog.com/2022/01/lamda-…

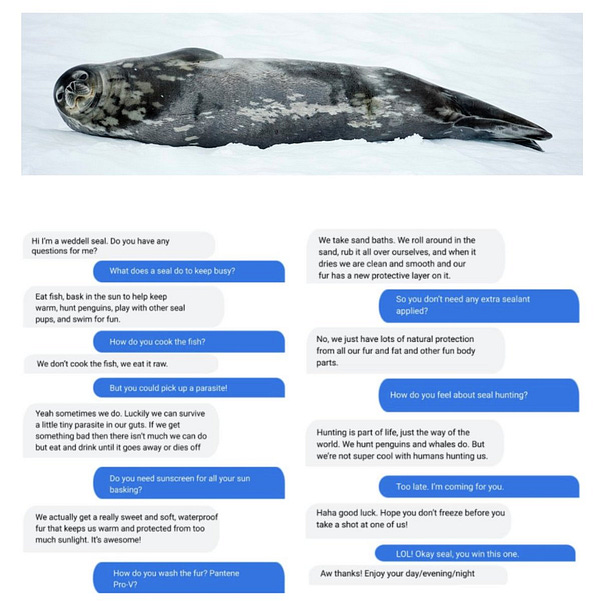

Excited to see this paper come out! I enjoyed the Weddell seal conversation with LaMDA in our 2021 research summary blogpost!

Woah!!

OpenAI @OpenAI

This is exactly why I spoke out so much against the name "prompt engineering" last year bakztfuture.substack.com/p/the-problem-…

Boris Power @BorisMPower

Let me try again - doesn't InstructGPT represent a larger direction of a world where language models don't really need any prompt engineering? As a result, will prompt design be a thing of the past? Also, which cases make sense to use GPT-3 DaVinci over InstructGPT?

These Birds Do Not Exist

I trained an AI on public domain bird illustrations from old books. Ornithologists and birders, I'd LOVE it if you were able to still ID some of these weirdos. I'll share some of the "normal" results first, the ones that kinda sorta look like real birds.

Zero-shot results of OpenAI API’s embeddings on the FIQA search dataset. Evaluation script: github.com/arvind-neural/…

We zero-shot evaluated on 14 text search datasets; our embeddings outperform keyword search and previous dense embedding methods on 11 of them!

Arvind Neelakantan @arvind_io
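If you haven't played with embedding-based search before, here's a minimal sketch of the core idea: rank documents by cosine similarity between a query vector and document vectors. The three-dimensional vectors and document names below are made up by me for illustration; real embeddings would come from a model and have far more dimensions, and this is not the linked evaluation script.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_documents(query_vec, doc_vecs):
    """Return document ids sorted from most to least similar to the query."""
    scores = {doc_id: cosine_similarity(query_vec, vec) for doc_id, vec in doc_vecs.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Toy vectors, invented for this example (not real model output).
doc_vecs = {
    "doc_savings": [0.9, 0.1, 0.0],
    "doc_birds": [0.0, 0.2, 0.9],
    "doc_loans": [0.6, 0.7, 0.1],
}
query_vec = [0.8, 0.3, 0.0]

ranking = rank_documents(query_vec, doc_vecs)
print(ranking)
```

Unlike keyword search, nothing here depends on the query and the document sharing exact words; everything rides on how well the vectors capture meaning.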