This Week in Multimodal/GPT-3/OpenAI News (Links) PART I

The space has really been accelerating in 2022! I encourage you to follow me on Twitter for up-to-date news, but I’ve curated some notable tweets and events from January 2022 below.

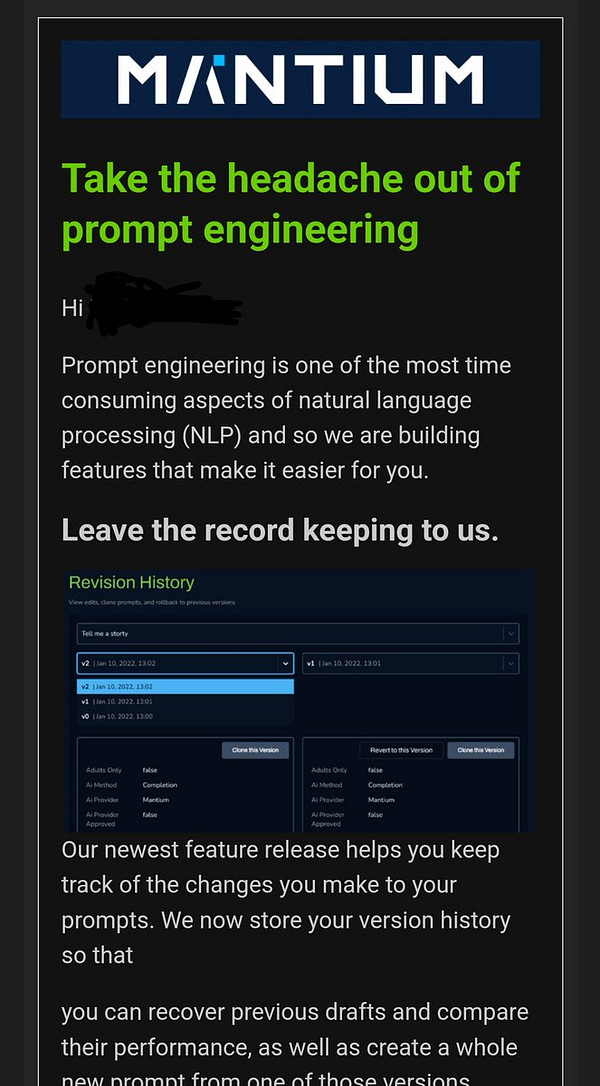

Free GPT-3 idea ...

Michael Seibel @mwseibel

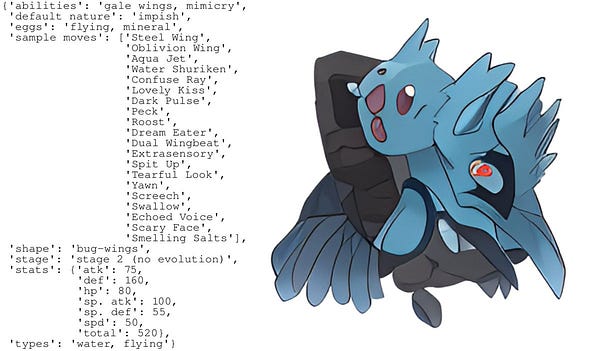

These Pokemon Do Not Exist

The fakemon AI was asked to generate "An ice and steel-type pokemon". Here's what it thinks that looks like!

#aigeneratedpokemon #LookingGlassAI

GPT-3 has a lot of hype, but also merit

GitHub Copilot has less hype, but it's really creeping up on the mass market and is actively used

@images_ai @BenLevinGroup @RiversHaveWings @somewheresy I'm a big Ben Levin fan, so I'll definitely take a stab!

In my experience, VQGAN&CLIP excels at dream-like unreality while CLIP-Guided Diffusion excels at unreal hyperreality.

📄 Easily my favorite paper this year, showcasing @OpenAI's Codex - for solving *advanced mathematics*! 🤯

arxiv.org/pdf/2112.15594…

"We turn questions into programming tasks; automatically generate programs; then execute them, perfectly solving university-level @MIT problems."
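To make the quoted pipeline concrete (question to program, then execute the program), here is a minimal sketch. It does not call Codex or reproduce the paper's method: `generate_program` is a hypothetical stand-in that returns a canned program for one example question, and the `answer` variable convention is my own assumption.

```python
def generate_program(question):
    """Hypothetical stand-in for a code-generation model.

    A real system would send the question to a model; this stub only
    knows one canned example so the sketch stays self-contained.
    """
    canned = {
        "What is the sum of the integers from 1 to 100?":
            "answer = sum(range(1, 101))",
    }
    return canned[question]


def solve(question):
    """Turn a question into a program, run it, and return its answer."""
    program = generate_program(question)
    namespace = {}
    # exec is acceptable here only because the program text is canned;
    # model-generated code should run in a sandbox instead.
    exec(program, namespace)
    return namespace["answer"]


result = solve("What is the sum of the integers from 1 to 100?")
print(result)
```

The point of the split is that the model only has to write the program; the arithmetic itself is done by the interpreter.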

RuDOLPH 🦌🎄☃️: One Hyper-Modal Transformer can be creative as DALL-E and smart as CLIP

github: github.com/sberbank-ai/ru…

fast and light text-image-text transformer (350M GPT-3) designed for a quick and easy fine-tuning setup for the solution of various tasks

Someone finetuned ruDALL-E on the 3D models of Pokémon to make AI-generated Pokémon, and was able to preserve the ability to generate by type a bit better

Lots of useful tips in here for efficient CNN training!

* mixed precision

* NHWC

* stepwise learning rates

* balancing CPU/GPU data aug

* Pillow-SIMD >> Pillow

MosaicML @MosaicML
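As a quick illustration of the NHWC tip: NHWC ("channels last") and NCHW describe the order in which a 4-D image tensor is laid out in memory. The sketch below uses plain Python index arithmetic, not any framework's API, and the function names are my own.

```python
def nchw_offset(n, c, h, w, C, H, W):
    """Flat memory offset of element (n, c, h, w) in NCHW layout."""
    return ((n * C + c) * H + h) * W + w


def nhwc_offset(n, c, h, w, C, H, W):
    """Flat memory offset of element (n, c, h, w) in NHWC layout."""
    return ((n * H + h) * W + w) * C + c


# A batch of 3-channel 2x2 images.
C, H, W = 3, 2, 2

# Distance in memory between channel 0 and channel 1 of the same pixel.
nchw_stride = nchw_offset(0, 1, 0, 0, C, H, W) - nchw_offset(0, 0, 0, 0, C, H, W)
nhwc_stride = nhwc_offset(0, 1, 0, 0, C, H, W) - nhwc_offset(0, 0, 0, 0, C, H, W)
print(nchw_stride, nhwc_stride)
```

In NHWC all channels of one pixel sit next to each other, while in NCHW they are a full `H * W` plane apart.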

RuDOLPH (github.com/sberbank-ai/ru…) finetuned for an image2text task to predict food calories huggingface.co/spaces/AlexWor…

A new architecture for computer vision based on a sparse mixture of experts unlocks the training of large models by routing different inputs to different paths, and achieves top accuracy with about half the compute. Learn more and grab the code → goo.gle/3fiJAzY
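The idea of "routing different inputs to different paths" can be sketched in a few lines. This is a toy top-1 router in plain Python, not the architecture from the linked post: the gate weights, the two experts, and the function names are all made up for illustration.

```python
def route_top1(x, gate_weights):
    """Return the index of the expert with the highest gate score for x."""
    scores = [sum(xi * wi for xi, wi in zip(x, w)) for w in gate_weights]
    return max(range(len(scores)), key=scores.__getitem__)


def sparse_moe(x, gate_weights, experts):
    """Run only the selected expert on x; the other experts are skipped."""
    idx = route_top1(x, gate_weights)
    return idx, experts[idx](x)


# Two toy experts and a gate with one weight vector per expert (assumed values).
experts = [
    lambda v: [2 * t for t in v],   # expert 0 doubles its input
    lambda v: [-t for t in v],      # expert 1 negates its input
]
gate_weights = [[1, 0], [0, 1]]

print(sparse_moe([3, 1], gate_weights, experts))
print(sparse_moe([0, 2], gate_weights, experts))
```

Because each input touches a single expert, adding experts grows the model's parameter count without growing the per-input compute by the same factor, which is the intuition behind the compute savings the tweet mentions.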

Read all about Task-level Mixture-of-Experts (TaskMoE), a promising step towards efficiently training and deploying large models, with no loss in quality and with significantly reduced inference latency ↓